If you don’t mind using a gibberish .xyz domain, why not a 1.111B class? ([6-9 digits].xyz for $0.99/year)

Any chance you’ve defined the new networks as “internal”? (using docker network create --internal on the CLI or internal: true in your docker-compose.yaml).

Because the symptoms you’re describing (no connectivity to stuff outside the new network, including the wider Internet) sound exactly like you did, but didn’t realize what that option does…

It also means that ALL traffic incoming on a specific port of that VPS can only go to exactly ONE private wireguard peer. You could avoid both of these issues by having the reverse proxy on the VPS (which is why cloudflare works the way it does), but I prefer my https endpoint to be on my own trusted hardware.

For TLS-based protocols like HTTPS you can run a reverse proxy on the VPS that only looks at the SNI (server name indication) which does not require the private key to be present on the VPS. That way you can run all your HTTPS endpoints on the same port without issue even if the backend server depends on the host name.

This StackOverflow thread shows how to set that up for a few different reverse proxies.
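To make the SNI idea concrete, here is a rough Python sketch of what such a proxy has to do before it forwards anything: read the unencrypted ClientHello and pull out the host name. It is not how any particular reverse proxy implements it, and it ignores fragmented ClientHellos and Encrypted Client Hello. The `BACKENDS` table, the host names, and the 10.0.0.x peer addresses are made up for illustration, and `sample_client_hello` only exists to produce test input without touching the network.

```python
import ssl

# Hypothetical routing table: host name -> (WireGuard peer address, port).
BACKENDS = {
    "app.example.com": ("10.0.0.2", 443),
    "git.example.com": ("10.0.0.3", 443),
}


def extract_sni(data: bytes):
    """Return the SNI host name from a raw TLS ClientHello, or None."""
    try:
        # Record header: content type (0x16 = handshake), version, length.
        if data[0] != 0x16:
            return None
        body = data[5:5 + int.from_bytes(data[3:5], "big")]
        # Handshake header: message type (0x01 = ClientHello), 3-byte length.
        if body[0] != 0x01:
            return None
        pos = 4 + 2 + 32                      # skip header, version, random
        pos += 1 + body[pos]                  # session ID
        pos += 2 + int.from_bytes(body[pos:pos + 2], "big")  # cipher suites
        pos += 1 + body[pos]                  # compression methods
        end = pos + 2 + int.from_bytes(body[pos:pos + 2], "big")
        pos += 2
        while pos + 4 <= end:
            ext_type = int.from_bytes(body[pos:pos + 2], "big")
            ext_len = int.from_bytes(body[pos + 2:pos + 4], "big")
            pos += 4
            if ext_type == 0:                 # server_name extension
                # Layout: list length (2), name type (1, 0 = host_name),
                # name length (2), then the name itself.
                if body[pos + 2] != 0:
                    return None
                name_len = int.from_bytes(body[pos + 3:pos + 5], "big")
                return body[pos + 5:pos + 5 + name_len].decode("ascii")
            pos += ext_len
    except (IndexError, UnicodeDecodeError):
        pass  # truncated or malformed input: treat as "no SNI"
    return None


def sample_client_hello(hostname: str) -> bytes:
    """Let the ssl module produce a real ClientHello without any network I/O."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_CLIENT)
    incoming, outgoing = ssl.MemoryBIO(), ssl.MemoryBIO()
    tls = ctx.wrap_bio(incoming, outgoing, server_hostname=hostname)
    try:
        tls.do_handshake()
    except ssl.SSLWantReadError:
        pass  # expected: the handshake is waiting for a server reply
    return outgoing.read()


if __name__ == "__main__":
    hello = sample_client_hello("app.example.com")
    print(BACKENDS.get(extract_sni(hello)))
```

Note that nothing here needs a certificate or private key: the proxy only peeks at the first bytes and then passes the whole TCP stream through to whichever backend the name maps to.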

And MATLAB appears to produce 51, wtf idk

The numeric value of the ‘1’ character (the ASCII code / Unicode code point representing the digit) is 49. Add 2 to it and you get 51.

C (and several related languages) will do the same if you evaluate '1' + 2.
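The same arithmetic can be checked in Python, with the caveat that Python won’t do the implicit conversion for you ('1' + 2 raises a TypeError there), so this sketch spells it out with ord():

```python
digit = "1"

# ord() gives the code point of the character, which is what C and MATLAB
# implicitly use when a character takes part in arithmetic.
code_point = ord(digit)
total = code_point + 2

print(code_point, total, chr(total))  # prints: 49 51 3
```

The last value shows the other way to read the result: code point 51 is the character '3'.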

Fun fact: apparently on x86 just MOV all by itself is Turing-complete, without even using it to produce self-modifying code (paper, C compiler).

If there happens to be some mental TLS handshake RCE that comes up, chances are they are all using the same underlying TLS library so all will be susceptible…

Among common reverse proxies, I know of at least two underlying TLS stacks being used:

crypto/tls from the Go standard library (which has its own implementation, it’s not just a wrapper around OpenSSL).

No standard abbreviation exists for nautical miles but definitely don’t use nm because newton metres

Since as you mentioned Newtons are N not n, Newton meters are Nm. nm means nanometer.

Aurora is no longer maintained, but it still works just fine. It’s a Windows app, so not web-accessible or anything, but it’s free. It only contains the SRD content by default (probably for legal reasons), but there’s at least one publicly-accessible elements repository for it that you can find using your favorite search engine.

That domain currently hosts a “this domain may be for sale” page, but it’s been registered since 2005 so it’s definitely not because of this post.

Additionally, HTTPS is very easy to set up nowadays and the certificates are free [1].

[1]: Assuming you have a public domain name, but for ActivityPub that’s already a requirement due to the push nature of the protocol.

There are FOSS licenses (notably the GPL) that say that if you do resell (or otherwise redistribute) the software, you have to do so only under the same terms. (That is, you can’t sell a proprietary fork. But you could sell a fork under FOSS terms.) But none that say “no selling.”

For many companies (especially large ones), the GPL and similar copyleft licenses may as well mean “no selling”, because they won’t go near it for code that’s incorporated in their own software products. Which is why some projects have such a license but with a “or pay us to get a commercial license” alternative.

AFAIK docker-compose only puts the container names in DNS for other containers in the same stack (or in the same configured network, if applicable), not for the host system and not for other systems on the local LAN.

I have a similar setup.

Getting the DNS to return the right addresses is easy enough: you just set your records for subdomain * instead of a specific subdomain, and then any subdomain that’s not explicitly configured will default to using the records for *.
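As a toy model of that fallback (not how a DNS server actually stores or matches records; real wildcard matching per RFC 4592 is per record type and has more edge cases), assuming a made-up zone with one explicit subdomain and one wildcard entry:

```python
# Hypothetical zone data for example.com; names and addresses are placeholders.
RECORDS = {
    "www": "203.0.113.10",  # explicitly configured subdomain
    "*": "203.0.113.20",    # wildcard: everything not listed above
}


def resolve(subdomain: str):
    """Return the address for a single-label subdomain, falling back to '*'."""
    return RECORDS.get(subdomain, RECORDS.get("*"))


print(resolve("www"))       # explicit record wins
print(resolve("whatever"))  # falls through to the wildcard
```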

Assuming you want to use Let’s Encrypt (or another ACME CA) you’ll probably want to make sure you use an ACME client that supports your DNS provider’s API (or switch DNS provider to one that has an API your client supports). That way you can get wildcard TLS certificates (so individual subdomains won’t leak via Certificate Transparency logs). Point your ACME client at the Let’s Encrypt staging server until you see it issue a wildcard certificate for your domains, then switch to production.

Some other stuff you’ll probably want:

I believe on the free ARM instances you get 1Gbps per core (I’ve achieved over 2Gbps on my 4-core instance, which was probably limited by the other side of the connections). What you say may be correct for the AMD instances though.

If you’re using OpenSSH, the IdentityFile configuration directive selects the SSH key to use.

Add something like this to your SSH config file (~/.ssh/config):

    Host github.com
        IdentityFile ~/.ssh/github_rsa

    Host gitlab.com
        IdentityFile ~/.ssh/gitlab_rsa

This will use the github_rsa key for repositories hosted at github.com, and the gitlab_rsa key for repositories hosted at gitlab.com. Adjust as needed for your key names and hosts, obviously.

It’s possible your e-mail account was compromised, and that’s how they were able to click that confirmation link you ignored. Change your e-mail password.

I tried it on Linux Mint and I’m directed to FlatHub, which states:

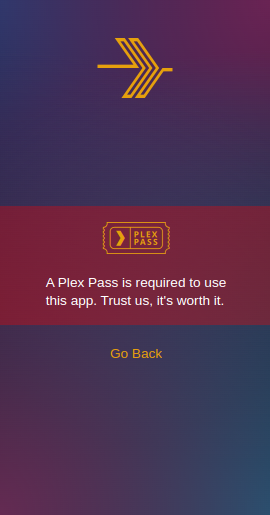

★★ You’ll need a Plex Media Server and an active Plex Pass to use this app ★★

Installed it anyway, but:

I guess they didn’t update that version yet?

Edit ~24 hours later: I just got the update. It works now.

Edit 2: … but media keys don’t seem to work :(

Someone already made one 3 hours ago. Though apparently it won’t help by itself, since their robots.txt disallows indexing anyway (and that same issue also asks for that to be adjusted).

According to their developer pages, they do look for that:

There are a handful of factors that play a role in canonicalization: […], and rel="canonical" link annotations.

(but Google considers it a hint, so they don’t have to honor it)

Also, that change was just for Lemmy. Other Fediverse sites may not do the same, which would lessen the effect. For example, from a quick look at a random federated post on kbin.social, there was no such <link rel="canonical"/> element present in the page source.
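If you’d rather not eyeball the page source, a check like that can be scripted. This is only a sketch using Python’s standard html.parser; the sample HTML strings and the example.org URL are placeholders, and fetching the real page is left out.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Remembers the href of the first <link rel="canonical"> it sees."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        # Also called for self-closing tags such as <link ... />.
        if tag != "link" or self.canonical is not None:
            return
        attributes = dict(attrs)
        rel_values = (attributes.get("rel") or "").lower().split()
        if "canonical" in rel_values:
            self.canonical = attributes.get("href")


def find_canonical(html: str):
    """Return the canonical URL declared in the page, or None if absent."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonical


with_link = '<html><head><link rel="canonical" href="https://example.org/post/1"/></head></html>'
without_link = '<html><head><link rel="stylesheet" href="style.css"></head></html>'

print(find_canonical(with_link))
print(find_canonical(without_link))
```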

No idea about the Lemmy hosting bit, but I highly doubt that .com you got will renew at $1 going forward. Judging by this list it’ll most likely be $9+ after the first year.

At $1/year, the registrar you used is taking a loss because they pay more than that to the registry for it. They might be fine with that for the first year to get you in the door, but they’d presumably prefer to be profitable in the long term.