Someone just needs to make a GPU-accelerated JSON decoder

Rockstar making GTA online be like: “Computer, here is a 512mb json file please download it from the server and then do nothing with it”

Everybody gangsta till we invent hardware accelerated JSON parsing

You mean like simdjson?

No. Verilogjson.

There is acceleration for text processing in AVX iirc

https://ieeexplore.ieee.org/document/9912040 “Hardware Accelerator for JSON Parsing, Querying and Schema Validation” “we can parse and query JSON data at 106 Gbps”

106 Gbps

They get to this result on 0.6 MB of data (paper, page 5)

They even say:

Moreover, there is no need to evaluate our design with datasets larger than the ones we have used; we achieve steady state performance with our datasets

This requires an explanation. I do see the need - if you promise 100Gbps you need to process at least a few Tbs.

Imagine you have a car powered by a nuclear reactor with enough fuel to last 100 years and a stable output of energy. Then you put it on a 5 mile road that is made up of the same 250 small segments in various configurations, but you know for a fact that it starts and ends at the same elevation. You also know that this car gains exactly as much performance going downhill as it loses going uphill.

You set the car driving and determine that it takes 15 minutes to travel 5 miles. You reconfigure the road, same rules, and do it again. Same result, 15 minutes. You do this again and again and again and always get 15 minutes.

Do you need to test the car on a 20 mile road of the same configuration to know that it goes 20mph?

JSON is a text-based, uncompressed format. It has very strict rules and a limited number of data types and structures. Further, it cannot contain computational logic on its own. The contents can be interpreted after being read to extract logic, but the JSON itself cannot change its own computational complexity. As such, it’s simple to express every possible form and complexity a JSON object can take within just 0.6 MB of data. And once they know they can process that file in however-the-fuck-many microseconds, they can extrapolate to Gbps from there.
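For a sense of scale, here is a rough sketch of how long 0.6 MB would take at the quoted 106 Gbps. It assumes decimal units (1 MB = 10^6 bytes, 1 Gbps = 10^9 bits per second); the paper's own unit convention is not stated in this thread, and the function name is made up for illustration.

```python
# Assumption: decimal units, 1 MB = 10**6 bytes and 1 Gbps = 10**9 bits/s.
DATASET_BYTES = 0.6e6      # dataset size quoted in the thread
THROUGHPUT_BPS = 106e9     # throughput quoted from the paper's abstract


def seconds_to_process(num_bytes, bits_per_second):
    """Time needed to stream num_bytes at a constant bit rate."""
    return num_bytes * 8 / bits_per_second


micros = seconds_to_process(DATASET_BYTES, THROUGHPUT_BPS) * 1e6
print(f"{micros:.1f} microseconds")
```

Under those assumptions the whole dataset goes by in a few tens of microseconds, which is the number everything else gets extrapolated from.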

That’s why Le Mans exists, to show that 100m races with muscle cars are a farce

Based on your analogy they drive the car for 7.5 inches (614.4 Kb by 63360 inches by 20 divided by 103179878.4 Kb) and promise based on that that the car travels 20mph, which might be true, yes, but the scale disproportion is too considerable to not require tests. This is not maths, this is a real physical device. How it would behave on larger real data remains to be seen.
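The 7.5 inch figure can be checked with the numbers exactly as given in the comment. The comment does not say how the 103179878.4 Kb denominator was derived, so this sketch takes it as given and only reproduces the arithmetic; the variable names are illustrative.

```python
# All four numbers are copied from the comment as-is; none are re-derived here.
DATASET_KB = 614.4            # 0.6 MB expressed in Kb, per the comment
INCHES_PER_MILE = 63360
ROAD_MILES = 20
FULL_SCALE_KB = 103179878.4   # the comment's full-scale data size, origin not stated

# Scale the 20 mile road by the ratio of tested data to full-scale data.
inches = DATASET_KB * INCHES_PER_MILE * ROAD_MILES / FULL_SCALE_KB
print(f"{inches:.2f} inches")
```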

I’m so impressed that this is a thing

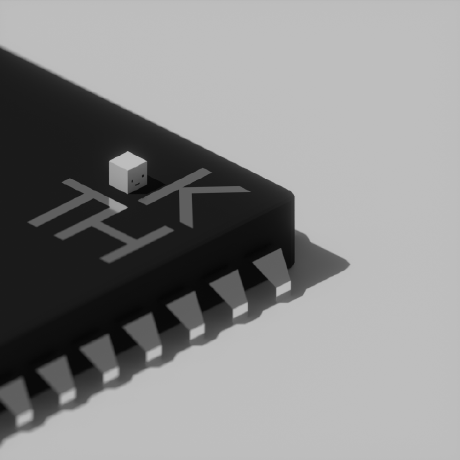

Coming soon, JSPU

I have the same problem with XML too. Notepad++ has a plugin that can format a 50MB XML file in a few seconds. But my current client won’t allow plugins installed. So I have to use VS Code, which chokes on anything bigger than what I could do myself manually if I was determined.

Time to train an LLM to format XML and hope for the best

Do we need a “don’t parse xml with LLM” copypasta?

Large Lregex Model

I don’t wish death on anyone.

Wait, it’s all regex?

Always has been

Meanwhile, I can open a 1GB file in (stock) vim without any trouble at all.

Formatting is what `xmllint` is for.

Just install python and format it. Then

I use vim macros. You can do some crazy formatting with it

You don’t need to open a file in a text editor to format it
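As a minimal sketch of that idea, Python's standard library can re-indent XML without any editor involved. This uses `xml.dom.minidom`, which loads the whole document into memory, so it is an illustration under that assumption rather than a recommendation for very large files; `pretty_xml` is a made-up helper name.

```python
from xml.dom import minidom


def pretty_xml(text, indent="  "):
    """Parse an XML string and return it re-indented."""
    return minidom.parseString(text).toprettyxml(indent=indent)


print(pretty_xml("<a><b>1</b></a>"))
```

For a real file you would read it from disk and write the result back out, or just reach for a dedicated command-line formatter.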

Render the json as polygons?

That just results in an image of JSON Bourne.

It’s time someone wrote a JSON shader.

Ray TraSON

Rayson

That is sometimes the issue when your code editor is a disguised web browser 😅

No, if you’re struggling to load 4.2 MB of text the issue is not Electron.

You jest, but I asked for a similar (but much simpler) vector / polygon model, and it generated it.

Maybe it’s time we invent JPUs (json processing units) to equalize the playing field.

Until then, we have simdjson https://github.com/simdjson/simdjson

Latest Nvidia co-processor can perform 60 million curly brace instructions a second.

Finally, something to process “databases” that ditched excel for json!

60 million CLOPS? No way!

The best I can do is an ML model running on an NPU that parses JSON in subtly wrong and impossible to debug ways

You need to make sure to remove excess whitespace from the JSON to speed up parsing. Have an AI read the JSON as plaintext and convert it to a handwriting-style image, then another one to use OCR to convert it back to text. Trailing whitespace will be removed.

Did you know? By indiscriminately removing every 3rd letter, you can ethically decrease input size by up to 33%!

Just make it an LJM (Large JSON Model) capable of predicting the next JSON token from the previous JSON tokens and you would have massive savings in file storage and network traffic from not having to store and transmit full JSON documents, all in exchange for an “acceptable” error rate.

Hardware accelerated JSON Markov chain operations when?

So you’re saying it’s already feature complete with most json libraries out there?

JSON and the Argonaut RISC processors

Given that it is the CPU that is limiting the parsing of the file, I wonder how a GPU-based editor like Zed would handle it.

Been wanting to test out the editor ever since it was partially open sourced but I am too lazy to get around to doing it

That’s not how this works. GPUs are fast because the kind of work they do is embarrassingly parallel and they have hundreds of cores. Loading a JSON file is not something that can be trivially parallelized. Also, Zed uses the GPU for rendering, not for reading files.
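One reason it is not trivial: whether a brace or comma is structural depends on whether you are inside a string, and that state comes from everything before it. A small sketch (the scanner below is illustrative, not how any particular library does it) shows a chunk scanned from the middle getting it wrong unless it is told the state at the split point.

```python
def structural_chars(text, in_string=False):
    """Return (positions of structural JSON characters, final in-string state)."""
    positions = []
    escaped = False
    for i, ch in enumerate(text):
        if in_string:
            if escaped:
                escaped = False        # character after a backslash is literal
            elif ch == "\\":
                escaped = True
            elif ch == '"':
                in_string = False      # closing quote
        elif ch == '"':
            in_string = True           # opening quote
        elif ch in "{}[]:,":
            positions.append(i)
    return positions, in_string


doc = '{"a": "x{y}", "b": [1, 2]}'
full, _ = structural_chars(doc)

split = 8  # arbitrary split point that happens to land inside the string "x{y}"
head, state_at_split = structural_chars(doc[:split])

# A worker that starts mid-file without context assumes it is outside a string.
naive_tail, _ = structural_chars(doc[split:])
# Given the correct carried-over state, the same chunk is classified properly.
good_tail, _ = structural_chars(doc[split:], in_string=state_at_split)

print("full scan:      ", full)
print("naive chunks:   ", head + [p + split for p in naive_tail])
print("stateful chunks:", head + [p + split for p in good_tail])
```

So chunks can be scanned in parallel only if something first works out the string state at every split point, which is the non-trivial part.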

I’d like to point out for those who aren’t in the weeds of silicon architecture, ‘embarrassingly parallel’ is a type of computational workflow. It’s just named that because the solution was an embarrassingly easy one.

Huh, I was about to correct you on the use of embarrassment in that the intent was to mean a large amount, but it seems a Wiki edit reverted it to your meaning a year ago, thanks for making me check!

As far as my understanding goes, Zed uses the GPU only for rendering things on screen. And from what I’ve heard, most editors do that. I don’t understand why Zed uses that as a key marketing point

To appeal to people who don’t really understand how stuff works but think GPU is AI and fast

i hate to break it to you bud but all modern editors are GPU based

Works fine in `vim`

Except if it’s a single line file, only god can help you then. (Or running `prettier -w` on it before opening it or whatever.)

`:syntax off` and it works just fine.

`cat file.json | jq` also works

Render Media works the best

`rm file.json`

Yes, Render Media is the best. It’s hard to believe that not many people know about this tool. It’s also natively installed in all Linux distros.

https://porkmail.org/era/unix/award#cat

`jq < file.json`

`cat` is for concatenating multiple files, not redirecting single files.

4.2 megs on one line? Vim probably can handle it fine, although syntax won’t be highlighted past a certain point

I’ve accidentally opened enormous single line json files more than once. Could be lsp config or treesitter or any number of things but trying to do any operations after opening such a file is not a good time.

Technically every JSON file is a single line, with line break characters here and there

The obvious solution is parsing jsons with gpus? maybe not…

Fifty million polygons processed by over 7 thousand processing cores (Intel iGPU), versus 4 million tokens processed by a single execution unit (with some instruction reordering trickery).

yea we need multithreaded json parsers

CUDA accelerated JSON parser is sorely needed

Doesn’t a 3070 have less than 7k cores? A UHD 750 (relatively recent iGPU) only has 256.

And I don’t know the structure of JSON that well, but can’t tokens be made of multiple chars?

You’re right, I looked up the highest Intel GPU count but forgot that they released desktop cards. Intel iGPUs “only” have 768 cores, it’s the Ampere cards that have thousands of cores.

JSON is UTF-8, so it can be up to four bytes per character theoretically. Depends on the language you’re processing, I guess.
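To separate the two questions: UTF-8 encodes a single code point in one to four bytes, and a JSON token (a string, number, literal, or punctuation mark) can span many characters. A rough sketch, using a simplified regex tokenizer that is only an illustration and not a validating parser:

```python
import re

# Bytes per character in UTF-8 for a few sample code points.
samples = ["a", "é", "€", "😅"]
byte_lengths = [len(ch.encode("utf-8")) for ch in samples]
print(dict(zip(samples, byte_lengths)))

# Simplified token pattern: strings, numbers, literals, punctuation.
# Assumption: well-formed input; whitespace is simply skipped over.
TOKEN = re.compile(
    r'"(?:[^"\\]|\\.)*"'                      # string, with backslash escapes
    r'|-?\d+(?:\.\d+)?(?:[eE][+-]?\d+)?'      # number
    r'|true|false|null'                       # literals
    r'|[{}\[\]:,]'                            # structural punctuation
)

tokens = TOKEN.findall('{"name": "Zoë", "ok": true, "n": 42}')
print(tokens)
```

Here `"name"` is one token of six characters, while `"Zoë"` is one token of five characters that takes six bytes on disk.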

C++ vs JavaScript

it’s more like gpu vs CPU

I’ve never had any problems with 4,2 MB (and bigger) JSON files. What languages/libraries/editors choke on it?

Notepad

Reject MB, embrace MiB.

You’ve got them confused, MiB is the one misusing metric

It isn’t misusing metric, it just simply isn’t metric at all.

Reject MiB, call it “MB” like it originally was.

If you’re not aware, it was called MB because of JEDEC, back when IEC units hadn’t been invented. IEC units were introduced because they remove the double meaning of JEDEC units (decimal and binary). IEC units only carry the binary meaning, which is why they’re superior. If you convert 1000 kB to 1 MB then use MB, but in the case of 1024 KiB to 1 MiB you should be using MiB. It’s all about getting the point across and JEDEC units aren’t good at it.

I’m failing to understand why we would need decimal units at all. What’s the point of them? And why do the original units have to change name to something as ridiculous as “Gibibyte” while the unnecessary decimal units get the binary’s old name?

You poor innocent soul… I can try to explain why decimal is even mentioned, but it would probably take a lot of time, and I’m not sure if I will be able to clear things up.

I can at least say this: 2 TB HDD drive is indeed 2*10^12 B, but suddenly shindow$ in its File Explorer will show you that in fact the drive is only 1.82 TB. But WHY? Everyone asks, feeling scammed. Because HDD spec uses decimal units (SI; MB) and Window$ uses binary units (JEDEC; MB), i.e., 1.82 TiB (IEC; MiB). And macOS also uses JEDEC units, AFAIK.
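The arithmetic behind that 1.82 figure, as a quick sketch (variable names are just for illustration):

```python
DRIVE_BYTES = 2 * 10**12          # a "2 TB" drive as labelled by the vendor

tb = DRIVE_BYTES / 1000**4        # decimal terabytes (SI)
tib = DRIVE_BYTES / 1024**4       # binary tebibytes (IEC)

print(f"{tb:.2f} TB, {tib:.2f} TiB")
```

Same number of bytes, two different divisors, and a label that does not say which one was used.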

More and more FOSS software uses IEC units and KDE Plasma is a good example: the file manager, package manager etc. use IEC units. Simply put, JEDEC added the binary meaning to decimal units, so at first MB (and now) only carried decimal meaning (until JEDEC shit out their standard). And the only reason why “gibibyte” is ridiculous is because we all grew up with the JEDEC interpretation of SI units. So it will take many generations for everyone to adopt xxbibyte words into daily conversations. I’m (already) doing my part. It’s just the legacy that we have to deal with.

All international bodies (BIPM, NIST, EU) agree that the SI prefixes “refer strictly to powers of 10” and that the binary definitions “should not be used” for them.

https://en.wikipedia.org/wiki/Binary_prefix#IEC_1999_Standard

https://en.wikipedia.org/wiki/Binary_prefix#Other_standards_bodies_and_organizations

https://en.wikipedia.org/wiki/JEDEC_memory_standards#JEDEC_Standard_100B.01

Well, thank you for taking the time to write this detailed explanation!

Windows and MacOS use the abbreviation “MB” referring to the binary units, correct? How come that these big OS’s use another unit than these large international bodies recognize?

On a side note, I’ve always found it weird why HDDs or SSDs are/were sold with 128GB, 256GB, 512GB etc. when they are referring to decimal units.

Windows and MacOS use the abbreviation “MB” referring to the binary units, correct?

Yez. I’m only sure about the first one, but didn’t test myself whether macOS is using powers of 2 or 10 under the hood (of MB). You can open the properties of something big and try converting the raw number of bytes with `/1024^n` and `/1000^n` and compare the end results.

How come that these big OS’s use another unit than these large international bodies recognize?

Legacy, legacy everywhere (IMO). And of course they don’t want to confuse their precious users that don’t know any better. And this also would break some scripts that rely on that specific output. GNU C library also uses JEDEC units by default, hence flatpak and other software.

On a side note, I’ve always found it weird why HDDs or SSDs are/were sold with 128GB, 256GB, 512GB etc. when they are referring to decimal units.

It is weird for everyone, because we mainly only count with multiples of 2 when it comes to digital size of information. I didn’t investigate why they use power of 10, but I’ve seen that some other hardware also uses decimal units (I think at least in RAM, but JEDEC is used intentionally or not for CPU cache memory). I had a link where the RAM thingy is lightly addressed, but I couldn’t find it.

P.S. it’s “OSes” and “macOS” BTW.

Maybe people would listen to you if you weren’t such a prick

Ok, show me what I did wrong and what should I do instead to not be a prick, please.

Don’t start a comment with ‘you poor innocent soul’