Ya, fuck legacy aggrid

This is where `printf` debugging really shines, ironically.

Honestly, this is why I tell developers who work with/for me to build in logging on day one. Not only will you always have clarity in every environment, but you won’t run into cases where adding logging later makes races/deadlocks “go away mysteriously.” A lot of the time, attaching a debugger to stuff in production isn’t going to fly, so “printf debugging” like this is truly your best bet.

To truly do this right, look into logging modules/libraries that support filtering, lazy evaluation, contexts, and JSON output for perfect SIEM compatibility (enterprise stuff like Splunk or ELK).

I once had a race condition that got tipped over by the debugger. So similar behavior to what’s in the meme, but the code started working once I put in the `print` calls as well. I think I ended up just leaving the print calls in, because I suck at async programming.

Yeah, I was going to mention race conditions as soon as I saw the parent comment. Though I’d guess most cases where the debugger “fixes” the issue while print statements don’t are also race conditions; the race just isn’t tight enough for the extra I/O time to change the result.

Best way to be thorough with concurrency testing, IMO, is to use synchronization to deliberately check the result of each potential race going either way. Of course, this is an exponential problem if you really want to be thorough (some races could depend on thread 1 getting one specific instruction in between two specific instructions in thread 2, or a race might involve more than two threads, which makes the exponential problem grow even faster).
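One way to do that in Go (a sketch; the comment names no language): split each thread’s racy operation into explicit steps and let a coordinator release them in a chosen order over channels. The race here is a classic lost update on a shared counter, and the `worker`/`run` names are hypothetical.

```go
package main

import "fmt"

// worker lets a coordinator release one step of a goroutine at a time.
type worker struct {
	next chan struct{} // coordinator -> worker: take your next step
	done chan struct{} // worker -> coordinator: step finished
}

func newWorker() worker {
	return worker{next: make(chan struct{}), done: make(chan struct{})}
}

// step releases exactly one step of w and waits until it has finished.
func (w worker) step() {
	w.next <- struct{}{}
	<-w.done
}

// increment is a racy read-modify-write split into two gated steps.
// The channel handoffs order every access, so the interleaving is chosen
// by the test rather than by the scheduler.
func increment(w worker, counter *int) {
	<-w.next
	seen := *counter // step 1: read
	w.done <- struct{}{}

	<-w.next
	*counter = seen + 1 // step 2: write back a possibly stale value
	w.done <- struct{}{}
}

// run plays one schedule: each entry is the index (0 or 1) of the worker
// that takes its next step. It returns the final counter value.
func run(schedule []int) int {
	counter := 0
	ws := []worker{newWorker(), newWorker()}
	for _, w := range ws {
		go increment(w, &counter)
	}
	for _, i := range schedule {
		ws[i].step()
	}
	return counter
}

func main() {
	fmt.Println("A then B:   ", run([]int{0, 0, 1, 1})) // 2
	fmt.Println("interleaved:", run([]int{0, 1, 0, 1})) // 1 (lost update)
}
```

With two workers of two steps each there are only six schedules: two serial ones (counter ends at 2) and four interleaved ones (counter ends at 1). Adding steps or threads grows that count combinatorially, which is exactly the blow-up mentioned in the comment.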

But a trick for print-statement debugging of race conditions is to keep your messages short. Even better if you can just send a dword to some fast logger asynchronously (though be careful not to introduce more race conditions with this!).
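A minimal Go sketch of that idea, under assumptions the comment doesn’t spell out: each trace point sends a 32-bit event code over a buffered channel, a single background goroutine does the formatting, and a full buffer drops events rather than blocking. Making the channel the only shared state is one way to heed the warning about not adding new races. `traceLog`, `Emit`, and the event encoding are all hypothetical.

```go
package main

import (
	"fmt"
	"sync"
	"sync/atomic"
)

// traceLog: hot paths enqueue a 32-bit code; one goroutine does the slow work.
type traceLog struct {
	events  chan uint32
	dropped atomic.Uint64
	wg      sync.WaitGroup
	lines   []string // only touched by the drain goroutine until Close returns
}

func newTraceLog(capacity int) *traceLog {
	t := &traceLog{events: make(chan uint32, capacity)}
	t.wg.Add(1)
	go func() {
		defer t.wg.Done()
		for code := range t.events {
			// Formatting happens here, off the code path being traced.
			t.lines = append(t.lines, fmt.Sprintf("event 0x%08x", code))
		}
	}()
	return t
}

// Emit never blocks: when the buffer is full the event is counted and
// dropped, so tracing cannot stall (and re-time) the code under test.
func (t *traceLog) Emit(code uint32) {
	select {
	case t.events <- code:
	default:
		t.dropped.Add(1)
	}
}

// Close stops the logger and returns the formatted events.
// Emit must not be called after Close (sending on a closed channel panics).
func (t *traceLog) Close() []string {
	close(t.events)
	t.wg.Wait()
	return t.lines
}

func main() {
	tl := newTraceLog(1024)
	var wg sync.WaitGroup
	for id := uint32(0); id < 4; id++ {
		wg.Add(1)
		go func(id uint32) {
			defer wg.Done()
			// Hypothetical encoding: worker id in the high 16 bits, step in the low 16.
			tl.Emit(id<<16 | 1)
			tl.Emit(id<<16 | 2)
		}(id)
	}
	wg.Wait()
	lines := tl.Close()
	fmt.Println(len(lines), "events,", tl.dropped.Load(), "dropped")
	for _, l := range lines {
		fmt.Println(l)
	}
}
```

Dropping instead of blocking is a deliberate trade-off: a lost trace line is usually cheaper than a logger that changes the timing of the race you’re chasing.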

This is one of the reasons why concurrency is hard even for those who understand it well.

Clearly you should just ship it with the debugger and call it a day

Exactly, who would put a debugged version into production anyway?

For those of you who’ve never experienced the joy of PowerBuilder, this could often happen in their IDE due to debug mode actually altering the state of some variables.

More specifically, if you watched a variable or property then it would be initialised to a default value by the debugger if it didn’t already exist, so any errors that were happening due to null values/references would just magically stop.

Another fun one that made debugging difficult: “local” scoping is shared between multiple instances of the same event. So if you had, say, a mouse move event that fired ten times as the cursor transited a row, and in that event you set something like

`integer li_current_x = xpos`

then the most recent assignment would quash the value of `li_current_x` in every instance of that event that was currently executing.

Fear keeps the bits in line.

Just run your prod env in debug mode! Problem solved.

Lol my workplace ships Angular in debug mode. Don’t worry though, the whole page kills itself if a dubious third-party library detects the console is open. Very secure and not brittle at all!

Please send help

Now I’m curious how this detection would work.

I’ve seen some that activate an insane number of breakpoints, so that the page freezes when the dev tools open. Firefox lets you disable breaking on breakpoints altogether, though, so it only really stops people who don’t know what they’re doing.

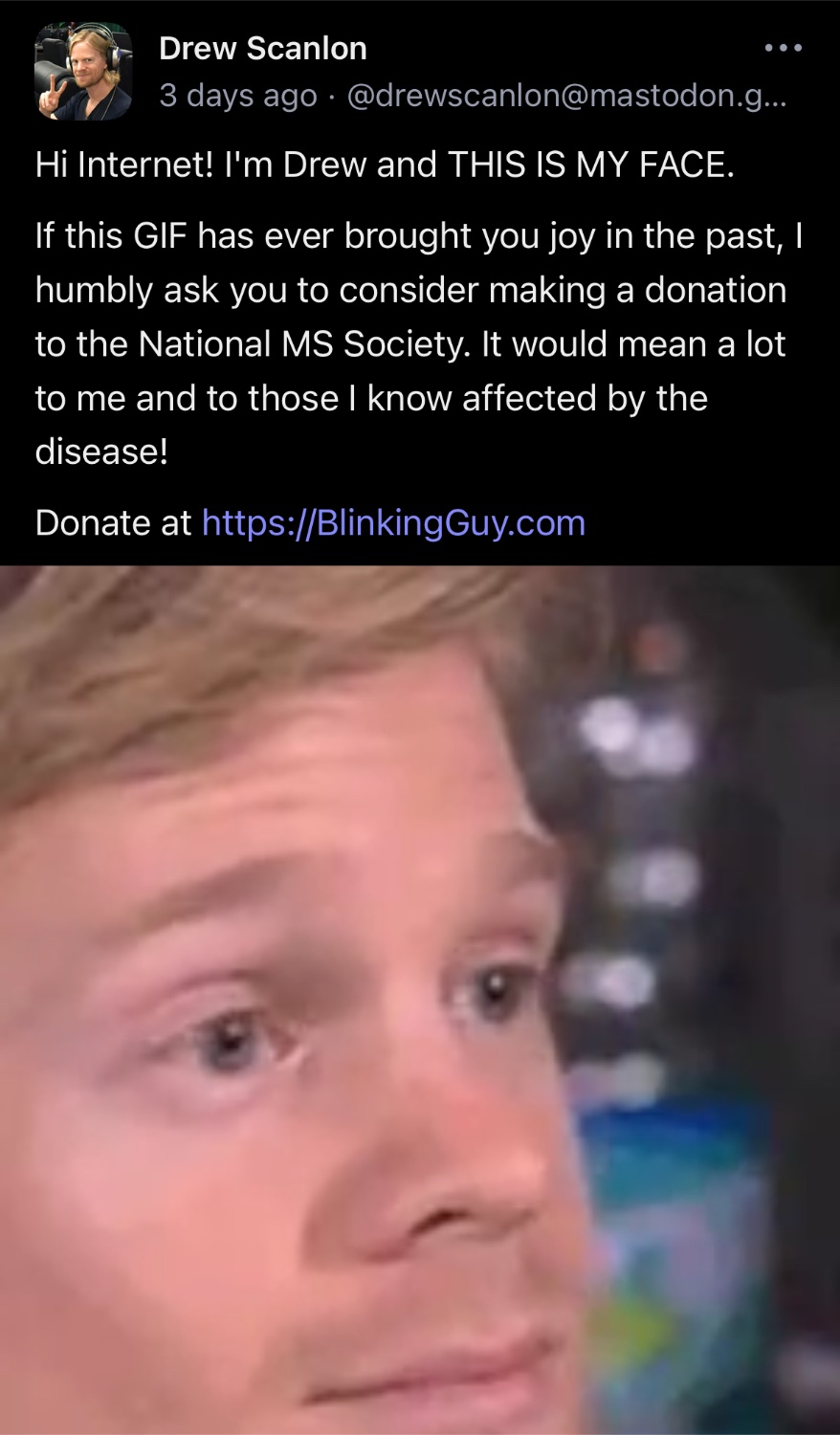

Blink-blink-blink. Blink. Blink. Blink. Blink-blink-blink.

No, I don’t have something in my eyes, I swear I’m fine. *looks nervously at boss*

Hang tight help is on the way.

You can imagine how many Node projects there are running in production with `npm run`. I have encountered JS/TS/Node devs who don’t even know that you should, like, build your project with `npm run build` and then ship and serve the bundle.

I just died a little inside. Thank you.

I have absolutely seen multiple projects on GitHub that specifically tell you to do “npm run” as part of deploying them.

Heisenbugs are the worst. My condolences for being tasked with diagnosing one.

The most cryptic status code I’ve received is 403: OK, while the entire app fails to load

That means you’re not allowed in, and that’s OK 😂

Probably should be redirected to a login page or something though 😅

“Shhh, it’s okay”

When I write APIs, I like to set endpoints to return all status codes; that way, no matter what you’re doing, you can always be confident you’re getting the expected status code.

heisenbug

Behold, https://rr-project.org/

I found the solution, we’re running debug builds in prod from now on

Someone has a compiler if statement left somewhere in their code (… probably)

I always thought it was Cary Elwes.

He worked for the gaming site/podcast “Giant Bomb” years ago. Pretty sure the image macro is pulled from one of their podcast videos.

Aren’t those almost always race condition bugs? The debugger slows execution, so the bug won’t appear when debugging.

Sometimes it’s also bugs caused by optimizations.

And that’s where Release with debug symbols comes in. It’s definitely harder to track down what’s going on when it skips 10 lines of code in one step, though. My code usually ends up the other way, because debug mode has extra assertions to catch things like uninitialized memory or use-after-free (I think specifically MSVC’s debug heap fills freshly allocated memory with `0xCDCDCDCD` and freed memory with `0xDDDDDDDD`).

I had one years ago with Internet Explorer that ended up being because `console.log` was not defined in that browser unless you had the console window open. That was fun to troubleshoot.

Turned out that the bug occurred randomly. On the first tries I just had the “luck” that it only happened when the breakpoints were on.

Fixed it by now btw.

“bug occurred randomly.”

“Fixed it by now btw.”

Someone’s not sharing the actual root cause.

I’m new to Go and wanted to copy some text data from a stream into the output stream of the HTTP response. I was copying the data to and from a []byte with a single Read() and Write() call and expected everything to be copied, since the buffer was always the size of the whole data. Turns out Read() sometimes fills the whole buffer and sometimes doesn’t.

Now I’m using io.Copy().

Note that this isn’t specific to Go. Reading from stream-like data, be it TCP connections, files or whatever, always comes with the risk that not all data is present in the local buffer yet. The vast majority of read operations return the number of bytes that could be read, and you should call them in a loop. The same goes for write operations, actually, if you’re writing to a stream-like object, as the write buffers may be smaller than what you’re trying to write.

Ah yes… several years ago now I was working on a tool called Toxiproxy that (among other things) could slice up the stream chunks into many random small pieces before forwarding them along. It turned out to be very useful for testing applications for this kind of bug.

I’ve run into the same problem with an API server I wrote in Rust. I noticed this bug 5 minutes before a demo and panicked, but fixed it with a 1-second sleep. Eventually, I implemented a more permanent fix by changing the simplistic I/O calls to ones better designed for streams.

The actual recommended solution is to just read in a loop until you have everything.

I had a bug like that today. A system showed 404, but only about 50% of the time. Turns out I had two vhosts with the same name, and requests hit them roughly evenly 😃

Had a similar thing at work not long ago.

A newly deployed version of a component in our system was only partially working, and the failures seemed to be random. It’s a distributed system, so the error could be in many places. After reading the logs for a while, I realized that only some messages were coming through (via a message queue) to this component, which made no sense. The old version (on a different server) had been stopped; I had verified it myself days earlier.

Turns out that the server with the old version had been rebooted in the meantime, therefore the old component had started running again, and was listening to the same message queue! So it was fairly random which one actually received each message in the queue 😂
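That failure mode is the competing-consumers pattern: on a plain work queue, each message is delivered to only one of the attached consumers. A hypothetical Go sketch, with a channel standing in for the queue (the post doesn’t say which broker was involved), shows a stale consumer quietly taking part of the traffic. The exact split depends on goroutine scheduling and varies from run to run.

```go
package main

import (
	"fmt"
	"sync"
)

// distribute feeds messages into one shared queue read by several
// consumers. Each message is received by exactly one of them, so a stale
// instance silently takes its share of the traffic.
func distribute(messages int, consumers []string) map[string][]int {
	queue := make(chan int)
	var mu sync.Mutex
	got := make(map[string][]int)
	var wg sync.WaitGroup
	for _, name := range consumers {
		wg.Add(1)
		go func(name string) {
			defer wg.Done()
			for msg := range queue {
				mu.Lock()
				got[name] = append(got[name], msg)
				mu.Unlock()
			}
		}(name)
	}
	for i := 0; i < messages; i++ {
		queue <- i
	}
	close(queue)
	wg.Wait()
	return got
}

func main() {
	got := distribute(1000, []string{"new-version", "old-version"})
	for name, msgs := range got {
		fmt.Printf("%s handled %d messages\n", name, len(msgs))
	}
}
```

From the new version’s point of view, this looks exactly like random message loss, which is why the logs only made sense once the second consumer was found.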

Problem solved by stopping the old container again and removing it completely so it wouldn’t start again at the next boot.